|

Prof Graham Finlayson

University of East Anglia, United Kingdom

Biography: Graham Finlayson is a Professor of Computer Science at the University of East Anglia. He joined UEA in 1999 when he was awarded a full professorship at the age of 30. He was and remains the youngest ever professorial appointment at that institution. Graham trained in computer science first at the University of Strathclyde and then for his masters and doctoral degrees at Simon Fraser University, where he was awarded a ‘Dean’s medal’ for the best PhD dissertation in his faculty. Prior to joining UEA, Graham was a lecturer at the University of York and then a founder and Reader at the Colour and Imaging Institute at the University of Derby. Professor Finlayson is interested in ‘Computing how we see’ and his research spans computer science (algorithms), engineering (embedded systems) and psychophysics (visual perception). He has published over 50 journal papers and over 200 refereed conference papers, and holds 25+ patents. He has won best paper prizes at several conferences including “The 5th IS&T conference on Colour in Graphics, Imaging and Vision” (2010) and “the IEE conference on Visual Information Engineering” (1995). Many of Graham’s patents are implemented and used in commercial products including photo processing software, dedicated image processing hardware (ASICs) and embedded camera software. Graham’s research is funded from a number of sources including government, industry and investment in spin-out companies. Industrial partners include Apple, Hewlett Packard, Sony, Xerox, Unilever and Buhler-Sortex. Significantly, Graham was the first academic at UEA (in its 50-year history) either to raise venture capital investment for a spin-out company (Imsense Ltd, which developed technology to make pictures look better) or to make money for the university, when this company was subsequently sold to a blue-chip industry major in 2010. In 2002, Graham was awarded the Philip Leverhulme prize for science and in 2008 a Royal Society-Wolfson Merit award.
In 2009 the Royal Photographic Society presented Graham with the Davies medal in recognition of his contributions to the photographic industry. The RPS made Graham a fellow in 2012. In recognition of distinguished service to the Society for Imaging Science and Technology, Graham was elected a fellow of that society in 2010. In January 2013 Graham was also elected to a fellowship of the Institution of Engineering and Technology.

Title: Color Homography

Abstract: Images of co-planar points in 3-dimensional space taken from different camera positions are a homography apart. Homographies are at the heart of geometric methods in computer vision and are used in geometric camera calibration, 3D reconstruction, stereo vision and image mosaicking, among other tasks. In this talk I show the surprising result that homographies are the apposite tool for relating image colors of the same scene when the capture conditions (illumination color, shading and device) change. Three applications of color homographies are investigated. First, I show that color calibration is correctly formulated as a homography problem. Second, I compare the chromaticity distributions of an image of colorful objects to a database of object chromaticity distributions using homography matching. Third, in the color transfer problem, the colors in one image are mapped so that the resulting image color style matches that of a target image; I will show that a natural image color transfer can be reinterpreted as a color homography mapping. Experiments demonstrate that solving the color homography problem leads to more accurate calibration and improved color-based object recognition, and points to a new direction for developing natural color transfer algorithms. |

|

Prof Jianmin Jiang

Shenzhen University, China

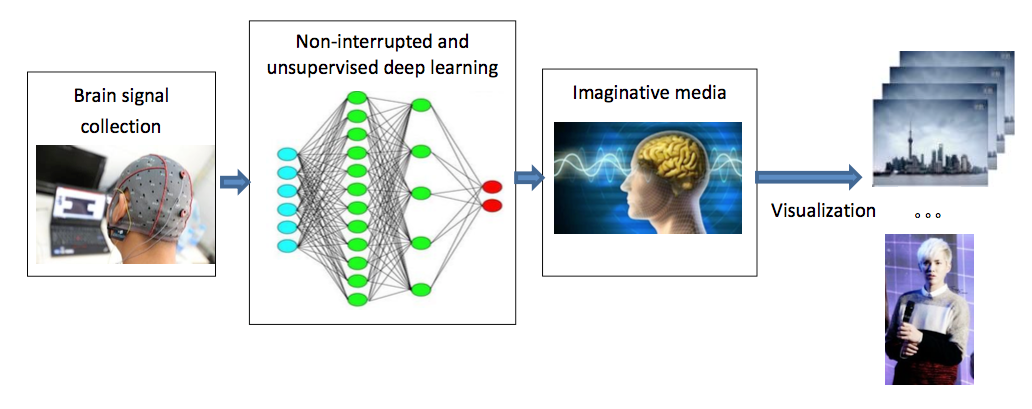

Biography: Jianmin Jiang received his PhD from the University of Nottingham, UK, in 1994, after which he joined Loughborough University, UK, as a lecturer in computer science. From 1997 to 2001, he worked as a full professor (Chair) of Computing at the University of Glamorgan, Wales, UK. In 2002, he joined the University of Bradford, UK, as a Chair Professor of Digital Media and Director of the Digital Media & Systems Research Institute. He worked at the University of Surrey, UK, as a chair professor in media computing during 2010-2015 and as a distinguished professor (1000-plan) at Tianjin University, China, during 2010-2013. He is currently a Distinguished Professor and director of the Research Institute for Future Media Computing at the College of Computer Science & Software Engineering, Shenzhen University, China. He was a chartered engineer, fellow of IET (IEE), fellow of RSA, and member of the EPSRC College in the UK. He also served the European Commission as proposal evaluator, project auditor, and NoE/IP hearing panel expert under both the EU Framework-6 and Framework-7 programmes. He was one of the contributing authors for the EU Framework-7 Work-Programme in 2013. He is currently serving the Image and Vision Computing Journal, ELSEVIER, as an associate editor.

Title: See What you think: Exploring a new concept of imaginative media

Abstract: In this talk, a new concept of imaginative media is introduced and explored to see if it could be developed into a feasible yet adventurous research project with high impact and significant expectations. Whenever we read novels, the scenes, the events, and the characters start to connect together and are visualized inside our brain via our imagination. As a result, we are naturally moved by or immersed in the stories, even without the stories being played back as videos or performed on the stage of a theatre.
Building on the extensive research and enormous advancement across the areas of digital media, computer vision, computer graphics, virtual reality, machine learning, and computational neuroscience, new research can be initiated to learn from collected brain signals and capture the activities that reflect imagination inside the brain. Computerized media production can then be further researched to visualize those imagined scenes, events and stories, completing the adventurous exploration of the new concept: imaginative media. A systematic view of such exploration is given in the following diagram. Details of the possible research as well as its challenges will be discussed, and suggestions and critiques are welcome.

|

|

A/Prof Manoranjan Paul

Charles Sturt University, Australia

Biography: Manoranjan Paul received the B.Sc.Eng. (Hons.) degree in Computer Science and Engineering from Bangladesh University of Engineering and Technology (BUET) in 1997 and the PhD degree from Monash University, Australia, in 2005. He was a Post-Doctoral Research Fellow at the University of New South Wales from 2005 to 2006, Monash University from 2006 to 2009, and Nanyang Technological University from 2009 to 2011. Currently he is an Associate Professor and Director of E-Health Research at Charles Sturt University (CSU). His major research interests are in the field of Data Science, including Data Compression, Video Technology, Computer Vision, E-Health, Hyperspectral Imaging, Medical Imaging, and Medical Signal Processing. He has published more than 140 refereed publications. He was an invited keynote speaker at DICTA 2017 & 2013, CWCN 2017, IEEE WoWMoM 2014, and IEEE ICCIT 2010. A/Prof Paul is a Senior Member of the IEEE and ACS (Australian Computer Society). He has served as a guest editor of five issues of Journal of Multimedia and Journal of Computers. Currently A/Prof Paul is an Associate Editor of EURASIP Journal on Advances in Signal Processing. He was a Program Chair of PSIVT 2017 and Publicity Chair of IEEE DICTA 2016. He was an ICT Researcher of the Year 2017 finalist, selected by the Australian Computer Society. He obtained the Research Excellence Supervision Award 2015 and the Research Excellence Award 2013 at Faculty level, CSU. He also obtained Research Excellence Awards in 2017 and 2011 at School level. He has obtained more than $15M in competitive grant money, including the most prestigious Australian Research Council (ARC) Discovery Project Grant and Cybersecurity CRC.

Title: Video data compression, processing and evaluation with human feedback in the loop

Abstract: Video has the highest capability to engage human beings compared to any other media. It is processed by the brain 60,000 times faster than text.
Cisco predicts that 80% of global Internet consumption will be video content by 2019 and 75% of mobile traffic will be video by 2020. This tells us the importance of video data in our daily life. To benefit from video data, we face a number of challenges: (i) how to tackle the huge volume of video data, as some applications require ultra-compression for transmission, (ii) how to extract important information from the video data, and (iii) how to evaluate the quality of compressed/extracted end products. The coding, computer vision and quality experts have carried out research in these areas mostly in isolation. For example, computer vision researchers have mainly concentrated on various analysis techniques without giving much attention to the quality of the videos, which are used directly from the video capturing devices in raw formats. Video coding researchers, on the other hand, have concentrated on extreme compression in the face of limited communication bandwidth without caring much about end users receiving somewhat distorted lossy images. Quality researchers have concentrated on evaluating the end product of videos without providing enough human-perceived feedback for video processing/understanding. For high performance we need to integrate the knowledge from computer vision, coding, and human-computer interaction. This need arises from the recent expansion of new technologies such as augmented/virtual/mixed reality, CCTV cameras, mobile devices, wireless communication, IoT, eye tracking technology, devices for capturing brain signals e.g. EEG, YouTube, etc. In this talk I would like to highlight our recent contributions to address the above-mentioned challenges. More specifically, the contributions are in the areas of vision-aided video coding, virtual view synthesis, multi/free viewpoint video, video summarization with eye tracking & EEG, and quality assessment with eye tracking technology. |

|

Prof Svetha Venkatesh

Australian Laureate Fellow

Alfred Deakin Professor, Deakin University, Australia

Biography: Svetha Venkatesh is an ARC Australian Laureate Fellow, Alfred Deakin Professor and Director of the Centre for Pattern Recognition and Data Analytics (PRaDA) at Deakin University. She was elected a Fellow of the International Association for Pattern Recognition in 2004 for contributions to the formulation and extraction of semantics in multimedia data, and a Fellow of the Australian Academy of Technological Sciences and Engineering in 2006. In 2017, Professor Venkatesh was appointed an Australian Laureate Fellow, the highest individual award the Australian Research Council can bestow. Professor Venkatesh and her team have tackled a wide range of problems of societal significance, including the critical areas of autism, security and aged care. The outcomes have impacted the community and evolved into publications, patents, tools and spin-off companies. This includes 554 publications, 3 full patents, 3 start-up companies (iCetana.com, Virtual Observer.com) and a significant product (TOBY Playpad). Professor Venkatesh has tackled complex pattern recognition tasks by drawing inspiration and models from widely diverse disciplines, integrating them into rigorous computational models and innovative algorithms. Her main contributions have been in the development of theoretical frameworks and novel applications for analyzing large-scale multimedia data. This includes the development of several Bayesian parametric and non-parametric models, solving fundamental problems in processing multiple-channel, multi-modal temporal and spatial data.

Title: Delivering Efficiencies in Health Care and Manufacturing

Abstract: This talk considers what to do when confronted with failures with current data or analysis. What can we do when current predictions for rare events are poor? Instead of focusing on rare event classification, for example, suicide prediction, we focus on identifying the riskiest events with minimal error. Such events are likely precursors to outliers of interest.
We demonstrate our results through outlier detection in surveillance (leading to our start-up company iCetana, Australia) and in suicide risk prediction (implemented in Barwon Health, Geelong, Australia). We discuss the challenges in data modeling, the pitfalls and our outcomes. When data has special characteristics? We predict cancer toxicity risk, and show how we leverage the special characteristics of the data to build better predictive models. We share the insights we have learnt on our path from such data to models. When data is limited? We use Bayesian optimisation based methods to demonstrate how to accelerate the experimental process, the foundation of both product and process design. We show how we have been able to impact the discovery of novel materials and alloys. |